capAI: Internal Review Protocol

Scaling Conformity Assessments for the EU AI Act

In June 2023, the European Parliament adopted its position on AI legislation and began negotiations with member states on a final law to ensure that AI systems do not endanger people or society.

This legislation is widely known as the EU AI Act or AIA. While there is extensive literature on the Act itself and the regulations it will introduce, practical guidance is lacking on how enterprises and organisations can review their AI systems for compliance.

Conformity Assessment Procedure - capAI

The conformity assessment procedure is outlined in the AIA (Artificial Intelligence Act) and is aimed at ensuring that AI systems operated within the EU or affecting EU citizens are trustworthy, legally compliant, technically robust, and ethically sound. However, while the AIA provides extensive discussion of the aspects and outcomes of AI systems that it seeks to prevent, it neither prescribes nor details the form of such conformity assessments. This is the gap that capAI aims to fill. Specifically, capAI seeks to aid firms required to conduct an internal conformity assessment of high-risk AI systems.

capAI is a conformity assessment procedure for AI systems that aligns with the EU's Artificial Intelligence Act (AIA). It was authored by researchers at the University of Oxford's Saïd Business School and Oxford Internet Institute, and the full report is publicly available.

The purpose of capAI is to provide organisations with a governance tool that helps ensure AI systems are developed and operated in a way that is trustworthy, legally compliant, ethically sound, and technically robust. It aims to prevent harm and build trust in AI technologies by proactively assessing high-risk AI systems.

capAI adopts a process view of AI systems and covers the five stages of the AI life cycle: design, development, evaluation, operation, and retirement. It provides practical guidance on translating high-level ethics principles into verifiable criteria for the design, development, deployment, and use of ethical AI. By following capAI, organisations can check and document that their AI systems adhere to the ethical principles set out in the EU's guidelines.

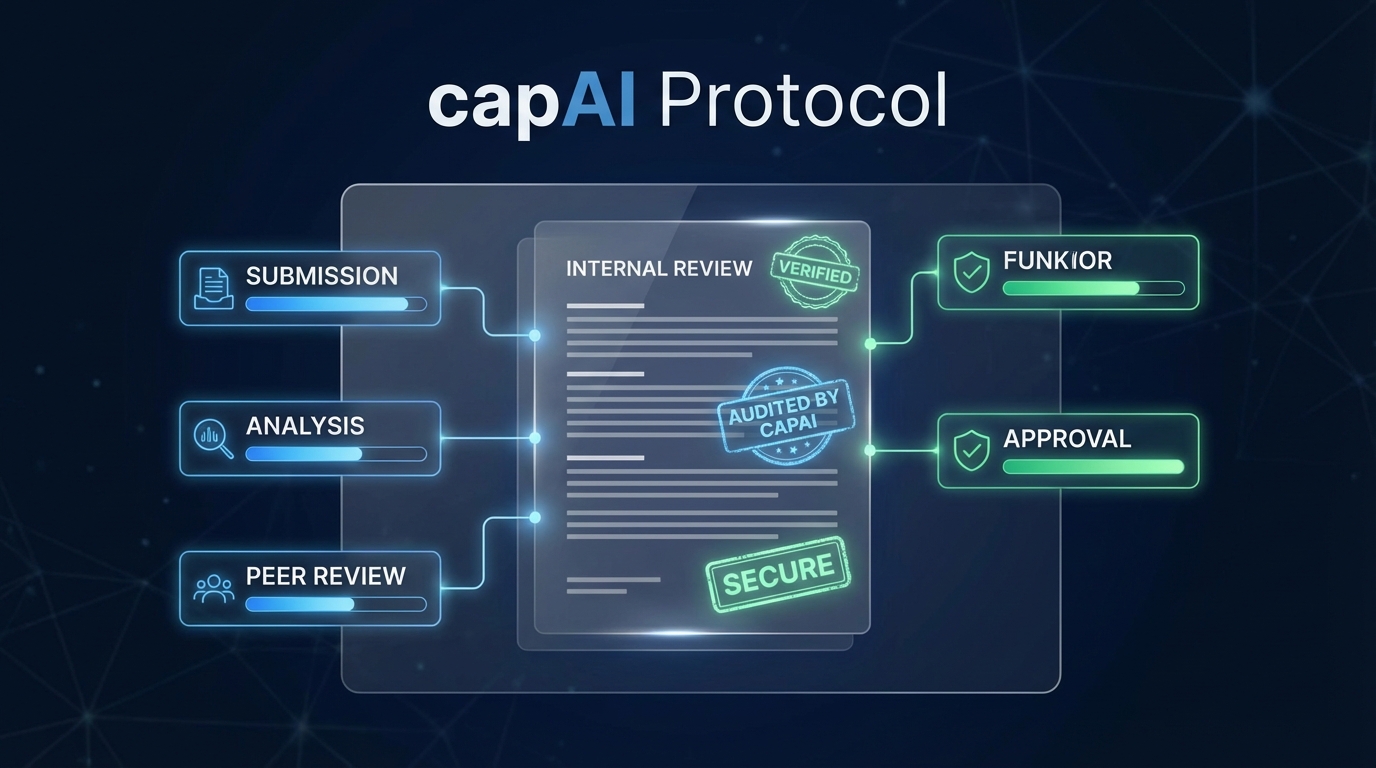

The capAI process includes three key documents: an internal review protocol (IRP), a summary datasheet (SDS), and an optional external scorecard (ESC). The IRP acts as a management tool for quality assurance and risk management, assessing the awareness, performance, and resources in place to prevent potential failures and respond to them. The SDS synthesizes key information about the AI system, including its purpose, status, and contact details. The ESC is generated through the IRP and provides an overall risk score for the AI system.

capAI is designed to promote good software development practices and prevent common ethical failures. It helps organisations validate their claims about ethical AI procedures, protect their reputation, and comply with regulatory mandates such as the AIA. While capAI provides a standardised procedure, it acknowledges that prioritising between irreconcilable normative values and anticipating all long-term consequences of AI decisions remain political and challenging tasks.

The authors of capAI emphasise that it should be viewed as an additional governance mechanism in the management toolbox, complementing existing governance mechanisms like human oversight, certification, and regulation. They also highlight the need for a plurality of actors promoting diverse ethics-based auditing (EBA) frameworks and suggest that official bodies authorise independent agencies to conduct EBA of AI systems.

EU AIA Categorisation of AI systems

The EU AIA categorizes AI systems into three main categories: prohibited, high risk, and low risk. Prohibited AI practices are banned outright and include real-time remote biometric identification systems (with some exceptions for law enforcement purposes) and social scoring algorithms. The AIA also imposes transparency obligations on AI systems that carry manipulation risks, such as chatbots or deepfakes.

High-risk AI systems are employed in specific areas listed in the AIA, such as critical infrastructure management, education and vocational training, employment and worker management, access to essential services, law enforcement, border control management, and the administration of justice and democratic processes. These systems are subject to a complex compliance regime covering their development and operation.

Low-risk AI systems, on the other hand, do not use personal data or make predictions that directly or indirectly affect individuals. These systems are considered to have little to no perceived risk, and as such, no formal requirements are stipulated by the AIA. Examples of low-risk AI systems include industrial applications in process control or predictive maintenance.

It is important to note that the requirements stipulated in the AIA apply to all high-risk AI systems. However, the obligation to conduct a separate conformity assessment under the AIA applies only to standalone AI systems. For AI systems embedded in products covered by sector regulations, such as medical devices, the AIA requirements will be incorporated into existing sectoral testing and certification procedures.

Overall, the EU AIA regulates AI systems according to their level of risk: prohibited practices are banned outright, high-risk systems are subject to a complex compliance regime, and low-risk systems face no formal requirements. Compliance with the AIA is the responsibility of providers that place AI systems on the EU market or put them into service in the EU, users of AI systems located within the EU, and providers or users outside the EU whose systems' output is used in the EU.
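To make the tiers above more concrete, here is a minimal Python sketch of a first-pass triage. It is a simplification under our own assumptions, not a legal classification: the area labels, the `AISystem` fields, and the `triage` function are hypothetical; the Act's exceptions (for example, for law enforcement) are not modelled; and a system that fits none of the three categories as described here is flagged for further analysis rather than forced into one.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH_RISK = "high risk"
    LOW_RISK = "low risk"
    NEEDS_REVIEW = "needs further review"


# Hypothetical labels paraphrasing the practices and areas named above,
# not the legal wording of the Act.
PROHIBITED_PRACTICES = {"social scoring", "real-time remote biometric identification"}
HIGH_RISK_AREAS = {
    "critical infrastructure management",
    "education and vocational training",
    "employment and worker management",
    "access to essential services",
    "law enforcement",
    "border control management",
    "administration of justice and democratic processes",
}


@dataclass
class AISystem:
    name: str
    area: str                  # hypothetical free-text label for the use-case area
    uses_personal_data: bool
    affects_individuals: bool  # makes predictions that directly or indirectly affect people
    embedded_in_regulated_product: bool = False  # e.g. part of a medical device


def triage(system: AISystem) -> Tuple[RiskTier, str]:
    """Return a simplified risk tier and the assessment route described in the text."""
    if system.area in PROHIBITED_PRACTICES:
        # Exceptions (e.g. for law enforcement) are deliberately not modelled.
        return RiskTier.PROHIBITED, "banned outright"
    if system.embedded_in_regulated_product:
        # Simplification: assume the sector rules make the embedded system high risk.
        return RiskTier.HIGH_RISK, "sectoral testing and certification"
    if system.area in HIGH_RISK_AREAS:
        return RiskTier.HIGH_RISK, "internal conformity assessment (e.g. capAI)"
    if not system.uses_personal_data and not system.affects_individuals:
        return RiskTier.LOW_RISK, "no formal AIA requirements"
    # Fits none of the three categories as described here.
    return RiskTier.NEEDS_REVIEW, "requires further analysis"


if __name__ == "__main__":
    screener = AISystem("CV screener", "employment and worker management", True, True)
    print(triage(screener))
```

Checking embedded products before the area list mirrors the distinction drawn above: embedded systems follow their sector's certification route, while standalone high-risk systems are the ones a capAI-style internal assessment targets.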


capAI Internal Review Protocol (IRP)


The stages of the internal review protocol (IRP) are as follows:

- Stage 1: Design - This stage involves defining the use case's requirements and organizational governance for the AI system. It includes setting ethical values and creating common goals and mental models.

- Stage 2: Development - In this stage, the AI system is developed, and technical documentation detailing its objectives and functionality is created. Adherence to a voluntary code of conduct is optional.

- Stage 3: Evaluation - The AI system is tested in a training environment to assess its performance and identify any potential failures. The evaluation stage also includes post-launch monitoring and logging of key events.

- Stage 4: Operation - The AI system is deployed in a production environment and maintained. Ongoing testing and monitoring are conducted to ensure its continued performance and adherence to ethical principles.

- Stage 5: Retirement - When the AI system is no longer in use, it is deactivated and retired. This stage involves the process of decommissioning the system and addressing any potential failures or issues that may arise.

Together, these stages cover the entire lifecycle of the AI system, from design to retirement. The IRP is complemented by the summary datasheet (SDS) and the optional external scorecard (ESC), which document the AI system's purpose, functionality, and performance.
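One way to keep track of where a system stands in the IRP is an ordered checklist per stage. The Python sketch below is a minimal illustration, assuming checklist items we paraphrased from the stage descriptions above; the names `Stage`, `CHECKLIST`, `open_items`, and `next_open_stage` are hypothetical and are not part of capAI, whose report defines its own, more detailed review items.

```python
from enum import IntEnum
from typing import Dict, List, Optional, Set


class Stage(IntEnum):
    DESIGN = 1
    DEVELOPMENT = 2
    EVALUATION = 3
    OPERATION = 4
    RETIREMENT = 5


# Hypothetical checklist items paraphrasing the stage descriptions above.
CHECKLIST: Dict[Stage, List[str]] = {
    Stage.DESIGN: ["use-case requirements defined", "governance and ethical values set"],
    Stage.DEVELOPMENT: ["technical documentation written"],
    Stage.EVALUATION: [
        "performance tested",
        "potential failures identified",
        "post-launch monitoring and logging set up",
    ],
    Stage.OPERATION: ["deployed to production", "ongoing testing and monitoring in place"],
    Stage.RETIREMENT: ["system decommissioned", "remaining issues addressed"],
}


def open_items(done: Dict[Stage, Set[str]]) -> Dict[Stage, List[str]]:
    """Map each stage to the checklist items not yet marked as done."""
    return {
        stage: [item for item in items if item not in done.get(stage, set())]
        for stage, items in CHECKLIST.items()
    }


def next_open_stage(done: Dict[Stage, Set[str]]) -> Optional[Stage]:
    """Return the earliest lifecycle stage that still has open items, or None."""
    remaining = open_items(done)
    for stage in sorted(remaining):  # IntEnum members sort in lifecycle order
        if remaining[stage]:
            return stage
    return None


if __name__ == "__main__":
    progress = {Stage.DESIGN: set(CHECKLIST[Stage.DESIGN])}
    print(next_open_stage(progress).name)  # DEVELOPMENT
```

Using an `IntEnum` keeps the stages in lifecycle order, so "what is the next thing to review?" is just the first stage with open items.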

If you wish to automate parts of the IRP, see our blog post on AI Verify, a software toolkit developed by Singapore's Infocomm Media Development Authority (IMDA) to support AI ethics and governance assessments. If you prefer not to install the software, we have prepared a playground where you can try it for FREE and 100% online.

Summary Datasheet (SDS) and External Scorecard (ESC)

The SDS (Summary Datasheet) is a document that must be submitted to the EU's public database on high-risk AI systems. It includes information such as the provider's contact details, the AI system's trade name, its intended purpose, its status (on the market or in service), and any certificates or declarations of conformity. It also allows for the inclusion of a URL for additional information. The SDS fulfils this registration requirement and provides a high-level summary of the AI system's purpose, functionality, and performance.
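Keeping the SDS as structured data makes it easy to spot gaps before submission. The sketch below models the fields listed above as a Python dataclass; the class and field names (`SummaryDatasheet`, `provider_contact`, `missing_fields`, and so on) are our own hypothetical choices and do not reflect the actual schema of the EU database.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Status(Enum):
    ON_THE_MARKET = "on the market"
    IN_SERVICE = "in service"


@dataclass
class SummaryDatasheet:
    provider_contact: str
    trade_name: str
    intended_purpose: str
    status: Status
    # Certificates or declarations of conformity, if any.
    certificates: List[str] = field(default_factory=list)
    # Optional URL pointing to additional information.
    more_info_url: Optional[str] = None

    def missing_fields(self) -> List[str]:
        """List required text fields that are empty or whitespace-only."""
        required = {
            "provider_contact": self.provider_contact,
            "trade_name": self.trade_name,
            "intended_purpose": self.intended_purpose,
        }
        return [name for name, value in required.items() if not value.strip()]


if __name__ == "__main__":
    draft = SummaryDatasheet("compliance@example.com", "HireRank", "", Status.IN_SERVICE)
    print(draft.missing_fields())  # ['intended_purpose']
```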

The ESC (External Scorecard) is a public reference document that organisations can make available to customers and other stakeholders of the AI system. It summarises relevant information about the AI system across four key dimensions: purpose, values, data, and governance. The purpose element describes the objective and functionality of the AI system, while the values element outlines the organizational values and norms that underpin its development. The data element defines the nature of the data used, whether it is public, proprietary, or private, and whether consent has been secured for its use. It also specifies if the AI system uses protected attributes. The governance element states the person responsible for the AI system, provides a point of contact for complaints or concerns, and specifies the dates of the last and next review of the AI system.
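The four ESC dimensions map naturally onto a small record type. The Python sketch below is an illustration under our own assumptions: the class `ExternalScorecard`, its field names, the `DataNature` enum, and the `review_overdue` and `render` helpers are hypothetical, chosen to mirror the purpose, values, data, and governance elements described above.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class DataNature(Enum):
    PUBLIC = "public"
    PROPRIETARY = "proprietary"
    PRIVATE = "private"


@dataclass
class ExternalScorecard:
    # Purpose: objective and functionality of the AI system
    purpose: str
    # Values: organisational values and norms underpinning its development
    values: str
    # Data: nature of the data, consent, and use of protected attributes
    data_nature: DataNature
    consent_secured: bool
    uses_protected_attributes: bool
    # Governance: accountable person, point of contact, review dates
    responsible_person: str
    complaints_contact: str
    last_review: date
    next_review: date

    def __post_init__(self) -> None:
        if self.next_review <= self.last_review:
            raise ValueError("next_review must fall after last_review")

    def review_overdue(self, today: date) -> bool:
        """True if the scheduled next review date has already passed."""
        return today > self.next_review

    def render(self) -> str:
        """Format the four ESC dimensions as plain text, one line each."""
        yes_no = {True: "yes", False: "no"}
        return "\n".join([
            f"Purpose: {self.purpose}",
            f"Values: {self.values}",
            f"Data: {self.data_nature.value}; consent secured: {yes_no[self.consent_secured]}; "
            f"protected attributes used: {yes_no[self.uses_protected_attributes]}",
            f"Governance: {self.responsible_person} (contact: {self.complaints_contact}); "
            f"last review {self.last_review.isoformat()}, next review {self.next_review.isoformat()}",
        ])
```

Validating the review dates when the record is created, and exposing `review_overdue`, reflects the governance element's emphasis on keeping the last and next review dates current.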

Frequently Asked Questions

- What is capAI?

- capAI (Conformity Assessment Procedure for AI) is a procedure, built around an internal review protocol, that helps organisations govern artificial intelligence systems and perform conformity assessments aligned with the EU AI Act.

- How is capAI used under the EU AI Act?

- capAI gives organisations a structured framework to document, evaluate, and monitor high-risk AI systems throughout their lifecycle, supporting the internal conformity assessments the EU AI Act requires.

Conclusion

The EU's Artificial Intelligence Act provides a crucial framework for ensuring AI systems are trustworthy and beneficial to society. As organizations begin reviewing their AI systems for conformity, following structured procedures like capAI will be invaluable. The capAI documentation provides actionable guidance on translating high-level ethics principles into concrete best practices across the AI lifecycle. Though assessing AI's risks remains an evolving challenge, frameworks like capAI are an important step towards building ethical and robust AI that serves the public good. By proactively embracing responsible AI governance, we can develop AI that enriches our world.

We encourage you to reach out to us via the contact form if you would like support in conducting an internal review of your organisation's AI systems.